Semantic Layer for Embedded AI Analytics: Why It Matters for SaaS

Alim Goulamhoussen

Published on 17.02.26

Updated on 16.04.26

7 min


Self-service AI analytics has always been the promise. Let users ask questions, get answers, make better decisions — no analyst required. AI chat makes that promise feel closer than ever. But there's a gap between a chat widget that looks impressive in a demo and one that your customers actually trust in production.

That gap has a name: the semantic layer.

This is one piece of a larger picture — our complete guide to AI-powered analytics covers the full architecture, from NLP to visualization.

If you're building a SaaS product and considering embedding an AI analytics chat for your customers, understanding what a semantic layer does — and what happens without one — might be the most important architectural decision you make.

Key Takeaways

Without a semantic layer, embedded AI analytics returns confident but incorrect answers — wrong numbers that erode customer trust.

A semantic layer translates raw database schema into business concepts: it defines what 'turnover' means, which columns to use, and what filters apply by default.

In multi-tenant SaaS products, the semantic layer also enforces data isolation — ensuring one customer's AI queries never surface another tenant's data.

You define the semantic layer once, during integration. Every end user who opens the AI chat benefits from that context automatically.

Toucan is an embedded AI analytics platform for SaaS product teams. Its AI-assisted semantic layer configuration automatically maps database columns to business concepts, reducing setup time to hours rather than weeks.

The gap between raw data and business language

Let's say you're building an HR SaaS platform. Your database stores employee records, contract types, absence events, payroll data. The tables have names like employee_events, contract_lines, absence_records. Columns are labeled evt_type, dept_id, is_active.

Now imagine your customer — an HR director — opens the AI chat in your product and asks: "What's our turnover rate by department this quarter?"

This is conversational analytics in action — and the semantic layer is what makes those questions answerable correctly.

To answer correctly, the AI needs to know that "turnover" means employees who left voluntarily, that "this quarter" refers to the current fiscal period your customer uses, that evt_type = 'resignation' is what counts, and that is_active = false combined with a departure date is the right filter. None of that is written anywhere in your database schema.

Without a semantic layer, the AI is guessing. It might return a number — it will return a number, confidently — but that number might count all departures including layoffs, or use the wrong date field, or miss employees on leave. Your customer makes an HR decision based on bad data. That's not an AI problem. That's an architecture problem.
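To make the difference concrete, here is a minimal sketch rather than a description of how any particular product works. It uses Python's built-in sqlite3 module with made-up rows. The employee_events table and the evt_type, dept_id, and is_active columns come from the example above; emp_id, departure_date, the sample data, and the TURNOVER_FILTER name are assumptions for illustration. It counts departures instead of computing a full rate, to keep the comparison short.

```python
import sqlite3

# Hypothetical semantic definition: the business term "turnover"
# is pinned to an explicit, reviewed filter.
TURNOVER_FILTER = (
    "evt_type = 'resignation' AND is_active = 0 AND departure_date IS NOT NULL"
)


def build_db() -> sqlite3.Connection:
    """Create an in-memory table with illustrative rows."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE employee_events ("
        "emp_id INTEGER, dept_id TEXT, evt_type TEXT, "
        "is_active INTEGER, departure_date TEXT)"
    )
    rows = [
        (1, "sales", "resignation", 0, "2026-02-03"),
        (2, "sales", "layoff", 0, "2026-02-10"),
        (3, "sales", "leave", 1, None),
        (4, "eng", "resignation", 0, "2026-01-20"),
        (5, "eng", "layoff", 0, "2026-03-01"),
        (6, "eng", "layoff", 0, "2026-03-02"),
    ]
    conn.executemany("INSERT INTO employee_events VALUES (?, ?, ?, ?, ?)", rows)
    return conn


def departures_by_dept(conn: sqlite3.Connection, where: str) -> dict:
    """Count rows per department for a given WHERE clause."""
    sql = (
        "SELECT dept_id, COUNT(*) FROM employee_events "
        f"WHERE {where} GROUP BY dept_id ORDER BY dept_id"
    )
    return dict(conn.execute(sql).fetchall())


conn = build_db()
# A plausible guess from raw schema: "inactive" must mean "left".
naive = departures_by_dept(conn, "is_active = 0")
# The governed definition: voluntary departures only.
governed = departures_by_dept(conn, TURNOVER_FILTER)

print(naive)     # {'eng': 3, 'sales': 2}
print(governed)  # {'eng': 1, 'sales': 1}
```

Both queries run without error and both return numbers. Only the second one matches what the HR director meant, and nothing in the output tells the user which is which.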

Why embedded AI makes this harder than internal BI

In a traditional internal BI setup, there's always a data analyst in the loop. They can catch a wrong query, add context, iterate. When the AI misunderstands something, someone notices and fixes it.

When you embed an AI chat in your SaaS product, that safety net disappears. Your customers use the chat on their own. They're not data people — they're HR directors, finance managers, operations leads. They trust that the AI understands their data the way they understand their business. And when it doesn't, they don't file a bug report. They stop using the feature. Or worse, they make decisions on bad numbers.

This is what makes embedded AI fundamentally different: you're deploying the AI into contexts you don't control, for questions you can't predict, to users who won't verify the output.

The stakes are higher. The margin for error is lower. And the AI needs a much stronger foundation to stand on.

The practical consequence for SaaS product teams is significant. When an embedded AI feature fails to answer correctly, the failure is invisible at first: the user gets a number, not an error. They may act on it, share it with their manager, or build a report around it. The problem surfaces weeks later, if at all. By then, the damage to trust is done. This is why the semantic layer cannot be an afterthought: it needs to be the first thing you configure, not the last.

What a semantic layer actually does

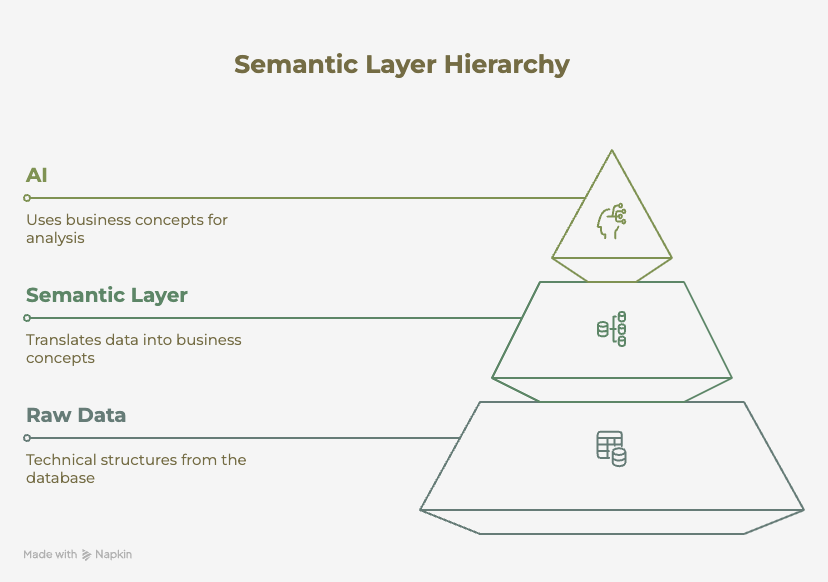

A semantic layer sits between your raw database and the AI. It's the translation layer that converts technical data structures into business concepts.

In practice, it does three things:

It defines what things mean. "Turnover" means voluntary departures. "Headcount" means active employees on payroll today, excluding contractors. "Absence" means approved leave events, not sick leave shorter than 3 days. These definitions live in the semantic layer, not in the AI's training data and not in your database schema.

It standardizes how things are named. Your database might have emp_departure_date, contract_end_dt, and offboarding_timestamp — three columns that all relate to when someone leaves. The semantic layer unifies these into a single concept the AI can reason about consistently.

This is what allows natural language query to work reliably — the AI doesn't have to guess which field means what.

It handles the implicit context. When an HR director asks about "our team," they mean their company's employees, not a raw join across all tenants in your multi-tenant database. The semantic layer encodes those filters and scoping rules so the AI never surfaces one customer's data to another.

Without this layer, the AI is working with raw schema — and raw schema was designed for machines, not for the business conversations your customers want to have.
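The naming unification described above can be sketched as a small mapping from one business concept to the physical columns behind it. This is an illustrative sketch, not a real product schema: the three column names come from the example, while the employees table, the emp_id column, the CONCEPTS dictionary, and the priority order of the columns are assumptions. It also assumes all three columns live in the same table, which the example does not state.

```python
import sqlite3

# Hypothetical semantic-layer entry: one concept, several source columns.
# The order is an assumed priority; the first non-null value wins.
CONCEPTS = {
    "departure_date": [
        "emp_departure_date",
        "contract_end_dt",
        "offboarding_timestamp",
    ],
}


def concept_sql(name: str) -> str:
    """Render a concept as a single SQL expression over its source columns."""
    columns = CONCEPTS[name]
    return f"COALESCE({', '.join(columns)}) AS {name}"


conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE employees ("
    "emp_id INTEGER, emp_departure_date TEXT, "
    "contract_end_dt TEXT, offboarding_timestamp TEXT)"
)
conn.executemany(
    "INSERT INTO employees VALUES (?, ?, ?, ?)",
    [
        (1, "2026-02-03", None, None),
        (2, None, "2026-03-31", None),
        (3, None, None, "2026-01-15"),
        (4, None, None, None),  # still employed: no departure recorded
    ],
)

query = f"SELECT emp_id, {concept_sql('departure_date')} FROM employees ORDER BY emp_id"
rows = conn.execute(query).fetchall()
```

The AI only ever needs to reason about departure_date. Which physical column supplied the value is a detail the mapping resolves in one place.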

How Toucan AI approaches this

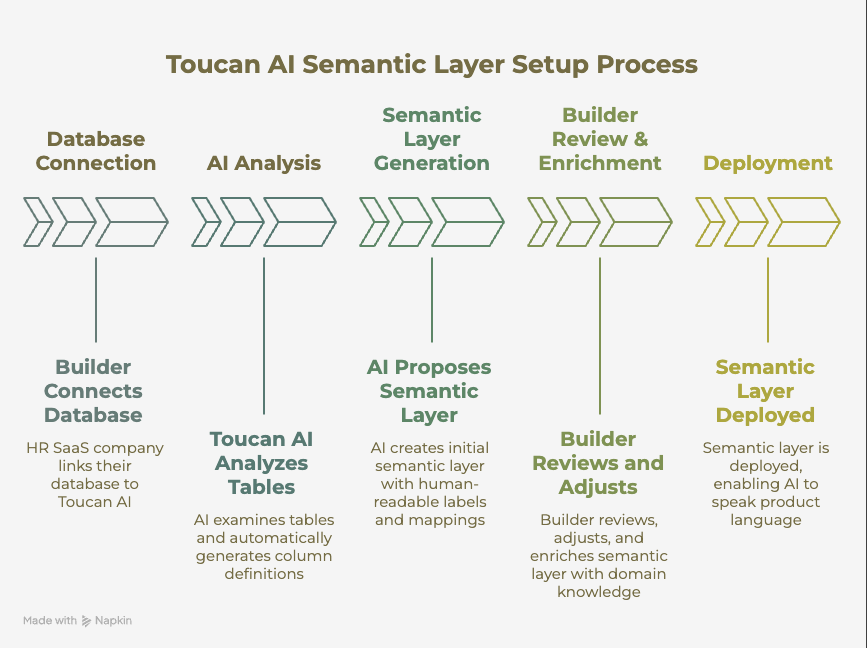

When a builder connects their database to Toucan AI, the setup process includes an AI-assisted semantic layer configuration. Toucan AI analyzes the connected tables and automatically generates definitions for each column — what it likely represents, how it maps to business concepts, what its values mean.

Column names get standardized into human-readable labels. dept_id becomes "Department." evt_type becomes "Event Type" with its values mapped to plain language. The AI proposes a first version of the semantic layer that the builder can review, adjust, and enrich with their own domain knowledge.
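As a rough illustration of the propose-then-review flow, here is a deliberately simple, rule-based sketch. It is not how Toucan AI generates labels (the article does not describe that mechanism); the abbreviation glossary, the dropped-suffix rule, and the function name are all assumptions made up for this example.

```python
import re

# Hypothetical abbreviation glossary; in practice the builder would review it.
ABBREVIATIONS = {"dept": "department", "evt": "event", "emp": "employee", "dt": "date"}
# Assumption: a trailing "id" adds nothing to a human-readable label.
DROPPED_SUFFIXES = {"id"}


def propose_label(column: str) -> str:
    """Propose a human-readable label for a snake_case column name."""
    tokens = [t for t in re.split(r"_+", column.lower()) if t]
    if len(tokens) > 1 and tokens[-1] in DROPPED_SUFFIXES:
        tokens = tokens[:-1]
    words = [ABBREVIATIONS.get(t, t) for t in tokens]
    return " ".join(w.capitalize() for w in words)


columns = ["dept_id", "evt_type", "is_active"]
proposed = {c: propose_label(c) for c in columns}

# The builder reviews the proposal and overrides what the rules got wrong.
builder_overrides = {"is_active": "Active"}
labels = {**proposed, **builder_overrides}

print(labels)
# {'dept_id': 'Department', 'evt_type': 'Event Type', 'is_active': 'Active'}
```

The point of the sketch is the shape of the workflow: an automatic first pass, followed by a human correction that always takes precedence.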

This matters because the builder — the HR SaaS company — knows their data better than anyone. They know that in their product, "active employee" means status = 'confirmed' AND contract_type != 'intern'. They can encode that logic once, in the semantic layer, and every customer who uses the AI chat benefits from it immediately.
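One way to picture "encode that logic once" is a named filter that every metric references instead of restating the condition. The sketch below is an assumption-heavy illustration: the filter text comes from the example above, but the Metric class, the FILTERS and METRICS registries, the employees table, and the compile_metric function are hypothetical names, not part of any real API.

```python
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass(frozen=True)
class Metric:
    name: str
    expression: str           # SQL aggregate, e.g. COUNT(*)
    filters: Tuple[str, ...]  # names of shared filters to apply


# Defined once by the builder, reused by every metric that needs it.
FILTERS = {
    "active_employee": "status = 'confirmed' AND contract_type != 'intern'",
}

METRICS = {
    "headcount": Metric("headcount", "COUNT(*)", ("active_employee",)),
}


def compile_metric(
    metric_name: str, table: str = "employees", group_by: Optional[str] = None
) -> str:
    """Expand a metric and its named filters into a SQL string."""
    metric = METRICS[metric_name]
    where = " AND ".join(f"({FILTERS[f]})" for f in metric.filters)
    select = f"{group_by}, {metric.expression}" if group_by else metric.expression
    sql = f"SELECT {select} FROM {table}"
    if where:
        sql += f" WHERE {where}"
    if group_by:
        sql += f" GROUP BY {group_by}"
    return sql


headcount_sql = compile_metric("headcount", group_by="dept_id")
```

If the definition of "active employee" changes, the builder edits one entry in FILTERS and every metric that references it picks up the change.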

The result is an AI that speaks the language of your product — not the language of your database.

Define context once, deploy everywhere

This is the builder's real leverage. You invest in the semantic layer once, during the integration phase. You define what your key metrics mean, how your data is structured, what filters apply by default. And then every customer who opens the AI chat gets an experience grounded in that context.

How you architect the AI system that sits on top also matters enormously — we cover how we went from a monolithic LLM to a multi-agent architecture to make the semantic layer work reliably at scale.

Compare this to shipping the AI chat without a semantic layer. Every customer encounter becomes a potential failure point. The AI improvises. Some answers are right, some are wrong, and there's no consistent logic behind either. You can't fix it at scale because there's nothing systematic to fix — just a language model doing its best with raw schema.

A well-configured semantic layer turns the AI from a probabilistic guesser into a reliable product feature. That's the difference between a demo that impresses and a feature that drives retention.

In practice, this means a SaaS team with 200 customers configures the semantic layer once, and all 200 customers get accurate AI responses from day one. Without that configuration, a team would need to debug AI errors customer by customer, an approach that doesn't scale. The time investment in semantic layer configuration typically pays back within the first month of customer-facing deployment: fewer support tickets, higher feature adoption, and a measurable increase in dashboard engagement from end users who now trust the answers they receive.

Shipping without a semantic layer is shipping a liability

The uncomfortable truth about embedded AI analytics without a semantic layer: you're not shipping a feature, you're shipping a liability.

Wrong answers erode trust faster than no answers. An HR director who gets a wrong turnover number doesn't think "the AI made a mistake." They think "this product doesn't work." And they're right.

The AI chat in your product is only as reliable as the context you give it. A semantic layer is how you give it that context — systematically, scalably, and in a way that improves over time as you enrich the definitions.

Your customers are trusting your product to help them understand their business. That trust starts with making sure the AI actually understands their data.

When you're evaluating which platform to build on, our comparison of the best AI embedded analytics tools shows how each handles the semantic layer — it varies widely.

The semantic layer isn't optional

Embedded AI analytics is a genuine step forward for SaaS products. It gives end users a way to explore data without needing to know SQL or navigate complex dashboards. But the power of natural language queries only materializes when the AI has the context to interpret them correctly.

The semantic layer is that context. It's what separates an AI that answers from an AI that answers correctly.

Toucan is an embedded AI analytics platform built for SaaS product teams. It delivers white-label, customer-facing analytics via web component or React component, with native multi-tenant row-level security and a semantic layer that maps your database schema to business concepts automatically.

If you want to add an embedded AI analytics experience and see how the semantic layer configuration works in practice, try Toucan AI for 14 days.

FAQ

What is a semantic layer in analytics?

A semantic layer is a translation layer that sits between a raw database and an analytics or AI system. It maps technical column names and database structures to business-friendly concepts, defining what 'revenue' means, which date field represents 'this quarter', and what filters apply by default. For AI analytics, it ensures that natural language queries are resolved against correct, governed definitions rather than raw schema.

Why does embedded AI analytics need a semantic layer?

Without a semantic layer, an AI model interprets database schema literally, and database schema was designed for machines, not for business conversations. The AI may return a plausible-looking number that is technically wrong: wrong date range, wrong column, wrong filter. In a customer-facing SaaS product where users don't verify outputs, this erodes trust faster than a missing feature would.

How does a semantic layer handle multi-tenant data isolation?

In a multi-tenant SaaS product, the semantic layer encodes tenant-level scoping rules. When a user from Company A queries the AI, every query is automatically filtered to that company's data, enforced at the semantic layer level rather than in the front-end. This means one semantic layer configuration can safely serve hundreds of tenants without any customer seeing another's data.
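Here is a minimal sketch of what server-side tenant scoping can look like, under stated assumptions. The absence_records table and dept_id column come from the HR example earlier in the article; the tenant_id column, the ALLOWED_DIMENSIONS allow-list, and the count_by function are hypothetical. The key idea is that the tenant identifier comes from the authenticated session and is bound as a query parameter, so the AI never gets to choose it. Database-level row-level security is another common way to reach the same guarantee.

```python
import sqlite3

# Hypothetical allow-list: the AI may only group by dimensions
# that the semantic layer exposes.
ALLOWED_DIMENSIONS = {"dept_id"}


def count_by(conn: sqlite3.Connection, tenant_id: str, dimension: str) -> list:
    """Count absence records per dimension, always scoped to one tenant."""
    if dimension not in ALLOWED_DIMENSIONS:
        raise ValueError(f"Unknown dimension: {dimension!r}")
    # The tenant filter is added here, not by the caller or the front-end.
    sql = (
        f"SELECT {dimension}, COUNT(*) FROM absence_records "
        f"WHERE tenant_id = ? GROUP BY {dimension} ORDER BY {dimension}"
    )
    return conn.execute(sql, (tenant_id,)).fetchall()


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE absence_records (tenant_id TEXT, dept_id TEXT)")
conn.executemany(
    "INSERT INTO absence_records VALUES (?, ?)",
    [
        ("company_a", "sales"),
        ("company_a", "sales"),
        ("company_a", "eng"),
        ("company_b", "sales"),
        ("company_b", "sales"),
        ("company_b", "sales"),
    ],
)

# tenant_id would come from the logged-in user's session in a real product.
company_a_view = count_by(conn, "company_a", "dept_id")
print(company_a_view)  # [('eng', 1), ('sales', 2)]
```

Because the AI only supplies a dimension name that is checked against an allow-list, there is no free-form SQL fragment through which another tenant's rows could leak.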

How long does semantic layer configuration take with Toucan?

Toucan's AI-assisted setup analyzes your connected tables and automatically generates a first version of the semantic layer: mapping column names to business labels, proposing metric definitions, and suggesting default filters. A builder with domain knowledge of their product's data model can review and enrich this in a few hours. Complex data models with many custom metrics may take a day or two.

Alim Goulamhoussen

Alim is Head of Marketing at Toucan and a growth marketing expert with over 8 years of experience in the SaaS industry. Specialized in digital acquisition, conversion optimization, and scalable growth strategies, he helps businesses accelerate by combining data, content, and automation. On Toucan’s blog, Alim shares practical tips and proven strategies to help product, marketing, and sales teams turn data into actionable insights with embedded analytics. His goal: make data simple, accessible, and impactful to drive business performance.
